Make sure the package type says pre-built for Hadoop 2.7 and later.Īs of writing this post (February 2017) the machine learning add-ins didn’t work in Spark 2.0. If possible, make sure Anaconda is saved to the following folder:Īdd an environment variable called PYTHONPATH and give it the value of where Anaconda is saved to:ĭownload the latest version of Spark from the following link. Download a version of Anaconda that uses Python 3.5 or less from the following link (I downloaded Anaconda3-4.2.0): You can check permissions with the following command: winutils.exe ls \tmp\hiveĪt the moment (February 2017), Spark does not work with Python 3.6. Run the following line in Command Prompt to put permissions onto the hive directory: winutils.exe chmod -R 777 C:\tmp\hive C:\Program Files\Java\jdk1.8.0_121):ĭownload the msi and run it on the machine.Ĭreate an environment variable called SCALA_HOME and point it to the directory where Scala has been installed:ĭownload the winutils.exe binary from this location:Ĭreate the following folder and save winutils.exe to it:Ĭreate an environment variable called HADOOP_HOME and give it the value C:\hadoop:Įdit the PATH environment variable to include %HADOOP_HOME%\bin:Ĭreate a folder called hive in the following location:

Once downloaded, copy the jdk folder to C:\Program files\Java:Ĭreate an environment variable called JAVA_HOME and set the value to the folder with the jdk in it (e.g. If not, download the jdk from the following link: Make sure you have the latest version of Java installed if you do, you should have the latest version of the Java jdk. In system Properties, click on Environment Variables… In the system pane, click on Advanced system settings: In Control Panel, click on System and Security: Right click on the Start button and choose Control Panel:

#How to install spark how to

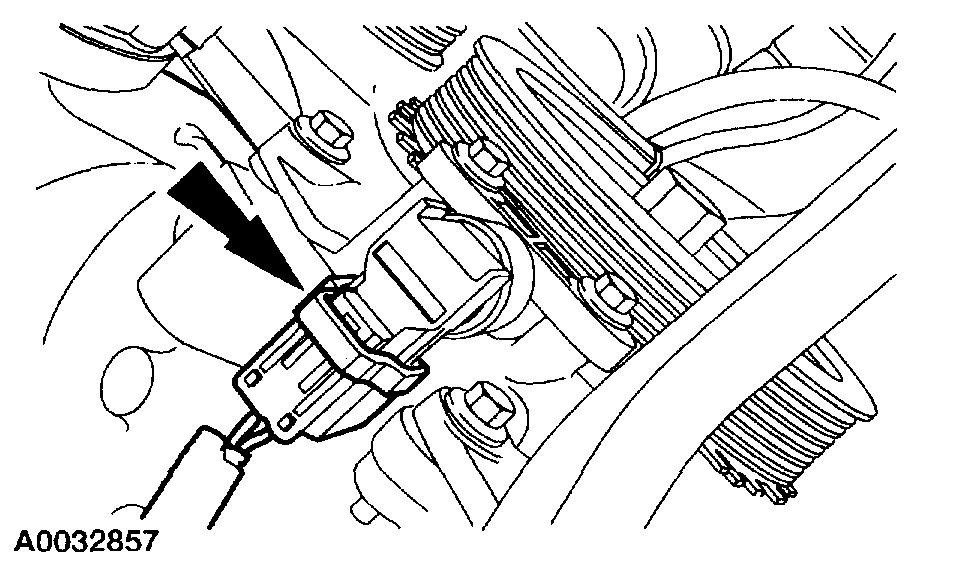

The following shows how to create an environment variable in Windows 10: To install Spark you need to add a number of environment variables.

Installing Spark requires adding a number of environment variables so there is a small section at the beginning explaining how to add an environment variable Create Environment Variables Install Anaconda – as an IDE for Python development.Installing Spark consists of the following stages: Other IDEs for Python development are available. The blog uses Jupyter Notebooks installed through Anaconda, to provide an IDE for Python development.

#How to install spark windows 10

This blog explains how to install Spark on a standalone Windows 10 machine. Whilst you won’t get the benefits of parallel processing associated with running Spark on a cluster, installing it on a standalone machine does provide a nice testing environment to test new code. It is possible to install Spark on a standalone machine.
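Once the environment variables from the steps above are in place, it can help to confirm them from Python before starting Spark. The sketch below is not part of the original post. It assumes the variable names the post uses (JAVA_HOME, SCALA_HOME, HADOOP_HOME, PYTHONPATH) and a semicolon-separated PATH, as on Windows. `check_spark_env` is a hypothetical helper name. The code avoids f-strings, so it also runs on the Python 3.5 interpreter recommended here.

```python
import ntpath
import os
from typing import List, Mapping

# Variable names taken from the steps in this post.
REQUIRED_VARS = ("JAVA_HOME", "SCALA_HOME", "HADOOP_HOME", "PYTHONPATH")


def check_spark_env(env: Mapping[str, str]) -> List[str]:
    """Return a list of problems with the Spark-related environment variables."""
    problems = []
    for name in REQUIRED_VARS:
        if not env.get(name):
            problems.append("{} is not set".format(name))

    # PATH entries are separated by ';' on Windows; compare case-insensitively
    # and ignore a trailing backslash on each entry.
    entries = {p.strip().rstrip("\\").lower() for p in env.get("PATH", "").split(";")}
    wanted = {r"%hadoop_home%\bin"}  # unexpanded form, if it appears literally
    hadoop_home = env.get("HADOOP_HOME")
    if hadoop_home:
        # Expanded form, e.g. C:\hadoop\bin
        wanted.add(ntpath.join(hadoop_home, "bin").rstrip("\\").lower())
    if not entries & wanted:
        problems.append(r"PATH does not include %HADOOP_HOME%\bin")
    return problems


if __name__ == "__main__":
    issues = check_spark_env(os.environ)
    print("\n".join(issues) if issues else "All Spark environment variables look set.")
```

Run it from a new Command Prompt, or restart Jupyter, after editing the variables. Programs that are already open keep the environment they started with.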
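The winutils.exe permission step can also be scripted. This is a minimal sketch, not the post's own method. It assumes winutils.exe was saved in %HADOOP_HOME%\bin (the folder added to PATH) and that the hive folder is C:\tmp\hive, as in the chmod command above. `winutils_exe` and `hive_permission_commands` are hypothetical helper names. The commands only run when the script is executed on Windows.

```python
import ntpath
import os
import subprocess
from typing import List

# Assumption: the hive folder is C:\tmp\hive, matching the chmod command in the post.
HIVE_DIR = r"C:\tmp\hive"


def winutils_exe(hadoop_home: str) -> str:
    # Assumption: winutils.exe was saved in %HADOOP_HOME%\bin (the folder added to PATH).
    return ntpath.join(hadoop_home, "bin", "winutils.exe")


def hive_permission_commands(hadoop_home: str, hive_dir: str = HIVE_DIR) -> List[List[str]]:
    """Return the chmod command from the post, then the ls command that checks it."""
    exe = winutils_exe(hadoop_home)
    return [
        [exe, "chmod", "-R", "777", hive_dir],
        [exe, "ls", hive_dir],
    ]


if __name__ == "__main__" and os.name == "nt":
    os.makedirs(HIVE_DIR, exist_ok=True)
    for cmd in hive_permission_commands(os.environ["HADOOP_HOME"]):
        # check=True raises CalledProcessError if winutils exits with a non-zero status.
        subprocess.run(cmd, check=True)
```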
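You can run a quick check in Jupyter to confirm the notebook uses a Python version that Spark supported when this post was written (3.5 or earlier). The sketch rests only on the post's February 2017 statement. Later Spark releases support newer Python versions, so the `MAX_SUPPORTED` cutoff is an assumption tied to that date. The helper name is hypothetical.

```python
import sys
from typing import Sequence

# Per the post (February 2017), Spark did not work with Python 3.6, so this
# check treats 3.5 and earlier as supported. Newer Spark releases relax this,
# so adjust the cutoff for the Spark version you install.
MAX_SUPPORTED = (3, 5)


def python_ok_for_spark(version: Sequence[int]) -> bool:
    """Compare only (major, minor) against the cutoff."""
    return tuple(version[:2]) <= MAX_SUPPORTED


if __name__ == "__main__":
    print(sys.version)
    if python_ok_for_spark(sys.version_info):
        print("This Python version should work with Spark.")
    else:
        print("Install an Anaconda build that uses Python 3.5 or earlier.")
```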
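The Spark download page offers several package types, and this post asks for the one pre-built for Hadoop 2.7 and later. This sketch assumes the downloaded archive keeps the usual `spark-<version>-bin-hadoop<major>.<minor>.tgz` file name. Under that assumption, you can read the Hadoop version from the name before unpacking it. The regex and the helper names are hypothetical.

```python
import re
from typing import Optional, Tuple

# Assumption: pre-built archives are named spark-<version>-bin-hadoop<major>.<minor>.tgz
PACKAGE_RE = re.compile(r"^spark-(\d+)\.(\d+)\.(\d+)-bin-hadoop(\d+)\.(\d+)\.tgz$")


def hadoop_version(package_name: str) -> Optional[Tuple[int, int]]:
    """Return the Hadoop (major, minor) a pre-built package targets, or None."""
    match = PACKAGE_RE.match(package_name)
    if match is None:
        return None  # e.g. a source package or an unexpected file name
    return int(match.group(4)), int(match.group(5))


def is_prebuilt_for_hadoop_27(package_name: str) -> bool:
    """True if the package is pre-built for Hadoop 2.7 or a later version."""
    version = hadoop_version(package_name)
    return version is not None and version >= (2, 7)
```

For example, a name ending in `-bin-hadoop2.7.tgz` passes. A source package, whose name has no `-bin-hadoop` part, returns False.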